Reconfigurable Dataflow Unit (RDU)

Delivering fast and energy-efficient inference

Introducing the SN50

Purpose-built for agentic inference, our fifth-generation chip, the SN50, is the only chip to deliver the speed and throughput required for agentic AI.

Built on the Dataflow Architecture, the SN50 delivers the best tokens per watt with 5X more compute and 4X more network bandwidth than our fourth-generation SN40.

From chips to racks

A single, high-performance rack combines 16 SN40L RDUs and can run the largest models, such as DeepSeek R1 671B and Llama 4 Maverick, with fast inference. These racks can be integrated seamlessly into any existing air-cooled data center.

Seamlessly achieve high performance

Solving AI’s data movement problem

Data movement is the most expensive operation when running AI. SambaNova RDUs are designed to solve this problem with an architecture that works like an assembly line on the chip.

Our Dataflow Architecture moves data directly from one operation to the next, saving power and time when processing the largest models.
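
As a loose software analogy for the assembly-line idea (an illustration only, not SambaNova's implementation or API), the Python sketch below runs the same three operations two ways. One writes every intermediate result to a buffer between steps; the other passes each value through all three steps before storing anything. The operations, function names, and the buffer-write counter are all hypothetical.

```python
from typing import Callable, Iterable, List, Tuple

Op = Callable[[float], float]

# Three stand-in operations; real model layers are far more complex.
OPS: List[Op] = [
    lambda x: x * 2.0,      # scale
    lambda x: x + 1.0,      # shift
    lambda x: max(x, 0.0),  # clamp at zero (ReLU-like)
]


def run_op_by_op(data: Iterable[float]) -> Tuple[List[float], int]:
    """Run each operation over the whole input, storing every intermediate."""
    current = list(data)
    buffer_writes = 0
    for op in OPS:
        current = [op(x) for x in current]  # a full intermediate buffer per step
        buffer_writes += len(current)
    return current, buffer_writes


def run_pipelined(data: Iterable[float]) -> Tuple[List[float], int]:
    """Pass each value through all operations before storing the result."""
    results: List[float] = []
    for x in data:
        for op in OPS:
            x = op(x)  # the value stays "in flight" between operations
        results.append(x)
    return results, len(results)  # only final outputs are written


inputs = [-1.0, 0.5, 3.0]
out_a, writes_a = run_op_by_op(inputs)   # 9 buffer writes
out_b, writes_b = run_pipelined(inputs)  # 3 buffer writes, same outputs
```

Both functions return the same results; only the number of stored intermediates differs, which is the cost the assembly-line description is aiming to avoid.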

Tiered memory supports the largest models

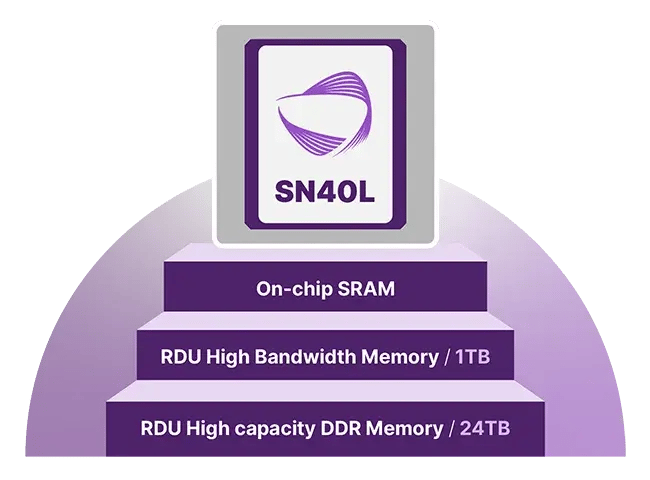

RDUs employ a unique three-tier memory architecture that enables scaling to the largest LLMs. The infrastructure can scale to support running and switching between multiple models in milliseconds.

[Chart: generation speed vs. generation throughput, Llama 3.3 70B]

The best speed and throughput in the Goldilocks Zone

SambaNova RDUs deliver low latency with high throughput, resulting in better tokenomics for use cases like AI coding agents that require near-real-time inference.

Energy-efficient AI inference

Our dataflow architecture delivers extraordinary performance without the overhead of moving data back and forth to memory, as GPUs do.

Our fourth-generation chip, the SN40, delivers fast inference at an average power draw of just 10 kW, allowing SambaRack systems to be air cooled.

From chips to racks

SambaNova RDUs combine to create a single platform that can run the largest models. The fifth-generation SN50 RDUs can scale up to 256 chips across multiple racks and run models of up to 10 trillion parameters with context lengths of up to 10 million tokens.

With the RDU as the heart of SambaRack, these systems can be seamlessly integrated into existing air-cooled data centers.

Built for cloud scale

New with the SambaNova SN50 chips is a scale-out network of up to 32K RDUs. This enables huge cloud-scale inference services, making the SN50 the ideal solution for inference service providers such as neo-cloud providers and hyperscalers.

Dataflow architecture

Our innovative compute and memory chip layout enables seamless dataflow between operations when processing AI models. This approach results in high-speed data traffic and significant gains in performance and efficiency.

Three-tier memory for efficiency

The SN40L design keeps multiple models in memory and switches between them in microseconds. This unique layout enables SambaNova to scale to the largest models, like DeepSeek and Llama 4, all on a single rack.

Related resources

SambaNova Launches First Turnkey AI Inference Solution for Data Centers, Deployable in 90 Days

Choose the right RDU for your organization

Future-proof your infrastructure

Our fourth-generation RDU SN40 and fifth-generation SN50 are the heart of the SambaNova solution platform.

Speed

RDUs are the only solution that runs the largest AI models on a single system with blazing-fast performance.

Energy

RDUs deliver the highest tokens per kilowatt-hour, which is ideal for data centers of all sizes.
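
Tokens per kilowatt-hour can be worked out from two measurements: sustained throughput and average power draw. A minimal sketch in Python follows; the function name and the sample figures are hypothetical and are not SambaNova measurements.

```python
def tokens_per_kwh(tokens_per_second: float, avg_power_kw: float) -> float:
    """Tokens generated per kilowatt-hour of energy consumed."""
    if avg_power_kw <= 0:
        raise ValueError("avg_power_kw must be positive")
    seconds_per_hour = 3600.0
    # Energy used in one hour (kWh) equals average power (kW) times 1 h,
    # so tokens per kWh = tokens produced in an hour / kW drawn.
    return tokens_per_second * seconds_per_hour / avg_power_kw


# Made-up figures for illustration only: 1,000 tokens/s at 5 kW average draw.
example = tokens_per_kwh(1_000.0, 5.0)  # 720,000 tokens per kWh
```

Comparing systems on this metric only makes sense when throughput and power are measured on the same model and workload.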

Agentic

The three-tier memory architecture keeps multiple models running and switches between them quickly, which makes it a strong fit for AI agents.

FAQs

What is the SN50 RDU?

The SN50 RDU (Reconfigurable Dataflow Unit) is SambaNova’s fifth-generation AI inference processor, designed specifically for large-scale, agentic workloads. It uses its unique Dataflow technology and three-tier memory architecture to reduce data movement, enabling faster inference, lower latency, and improved energy efficiency compared to traditional accelerator designs.

How does an RDU differ from a GPU?

GPUs are general-purpose accelerators designed to handle a wide range of compute workloads, primarily training. The SambaNova RDU is purpose-built for inference. Its Dataflow architecture maps model execution directly onto the processor and, together with its three-tier memory architecture, minimizes data movement to and from memory, which is the most expensive part of AI inference.

How does the SN50 compare to the SN40?

The SN50 is the latest generation of SambaNova’s RDU, offering higher compute performance, increased network bandwidth, and improved scalability compared to the SN40. While the SN40 is well-suited for existing inference deployments and power-constrained environments, the SN50 is designed for large-scale, agentic AI workloads. It enables faster token generation, better system throughput, and more efficient multi-model execution.

Which workloads does the SN50 support?

The SN50 supports a wide range of inference-heavy AI workloads that require low latency, high throughput, and efficient memory usage. These include AI agents, coding assistants, enterprise copilots, conversational AI, retrieval-augmented generation (RAG), and model hosting platforms. It is particularly well-suited to agentic workflows involving multi-step reasoning, tool usage, and frequent model switching.

Can the SN50 run multiple models at the same time?

Yes, the SN50 is designed to run multiple models simultaneously using its tiered memory architecture. This allows models to remain resident in memory and be switched quickly with minimal latency. The capability is especially important for agentic workloads that rely on multiple models across task steps, improving responsiveness, utilization, and overall inference efficiency.

How does the SN50 scale to large models?

The SN50 scales to large models through a combination of memory architecture and distributed deployment. Multiple racks can be interconnected to form inference clusters, enabling support for larger models, higher concurrency, and predictable performance at scale.