Multi-Agent AI Workflows with CrewAI on SambaNova

January 15, 2025

AI 2025 Predictions: 9 Key Trends Shaping the Future of AI

January 7, 2025

Qwen QwQ Now on SambaNova Cloud - Try 32B Preview

December 18, 2024

Meta Llama 3.3 70B Now Available Today for Developers and Enterprises

December 11, 2024

The SambaNova Startup Accelerator: Helping AI Innovators Realize Their Vision

December 10, 2024

Run Qwen 2.5 32B-Coder on SambaNova Cloud - 5X GPU Speed

December 6, 2024

How Gradio Makes Building Apps on SambaNova Cloud Super Easy

December 4, 2024

Hugging Face Makes it Faster to Review Papers with SambaNova

December 4, 2024

Zilliz: Powering AI RAG Applications with Vector Embeddings

December 3, 2024

Outperforming GPT-4o with Llama 3 8B: Domain Specific Fine Tuning for RAG

November 20, 2024

Oak Ridge National Laboratory Deploys SambaNova Suite, Enabling Energy-Efficient AI Inference for Science

November 18, 2024

Texas Advanced Computing Center Deploys SambaNova Suite, Enabling AI Inference for Science

November 18, 2024

Argonne National Laboratory Deploys SambaNova Suite to Advance AI Inference In Science Research

November 18, 2024

Correcting Common AI Benchmarking Errors with AI Starter Kits

November 11, 2024

Accelerating Coding with SambaNova Cloud

October 10, 2024

Developer Tips: Creating Valuable AI

October 3, 2024

Replacing the Judge: Can Llama 405B Outperform GPT4 in the Court of AI?

September 19, 2024

Judging Judges: All that is LLM Judgements does not glitter

September 19, 2024

Fastest AI Inference with Top Open Models - SambaNova Cloud

September 11, 2024

Advanced AI Apps Need Fast Inference. SambaNova Cloud Delivers It

September 10, 2024

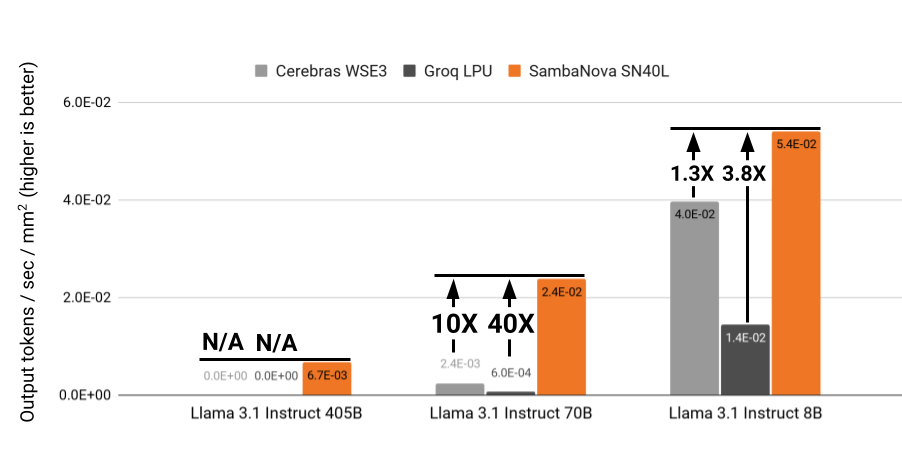

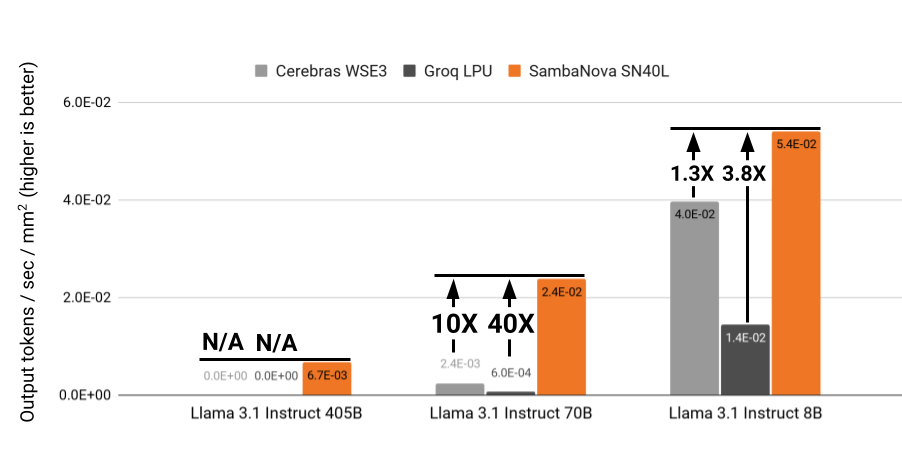

Why SambaNova's SN40L Chip Is the Best for Inference

September 10, 2024

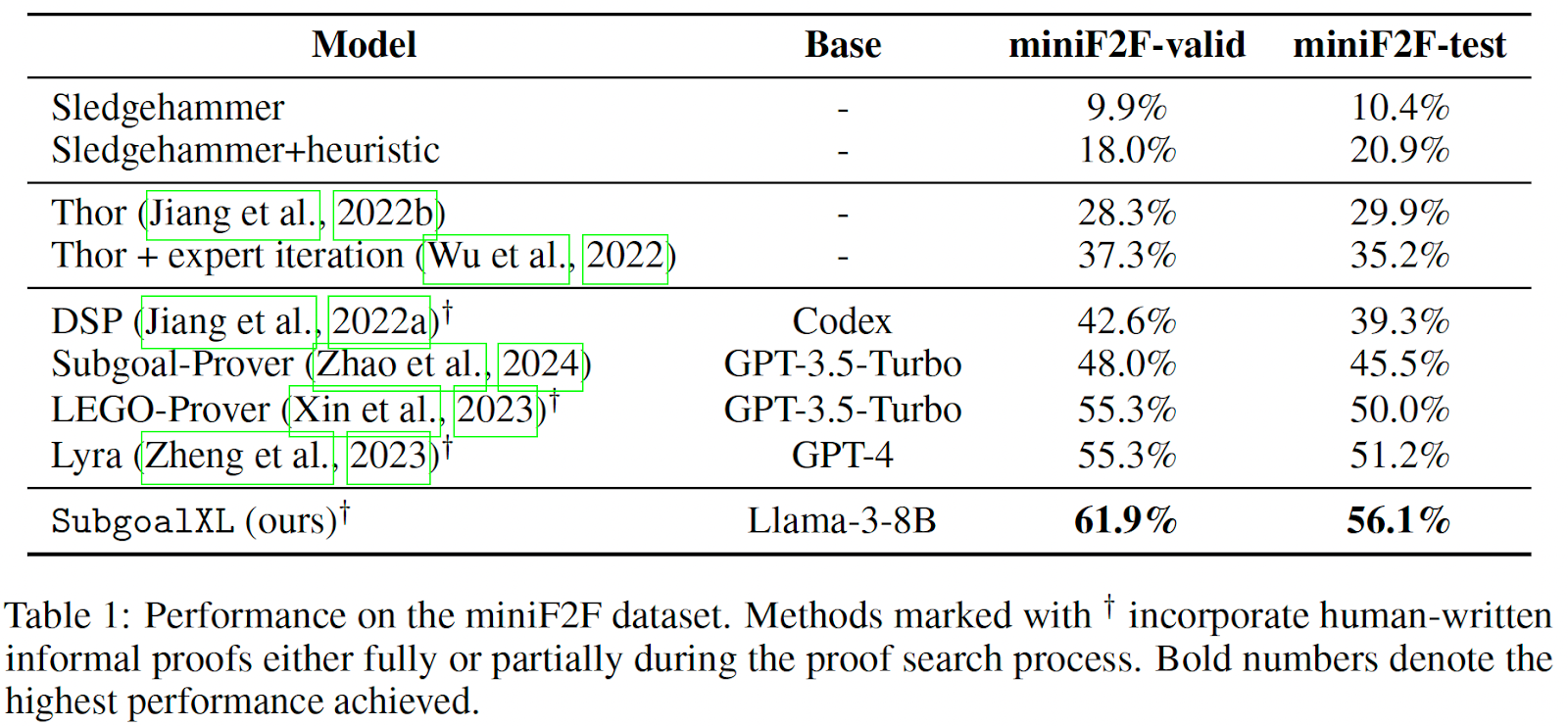

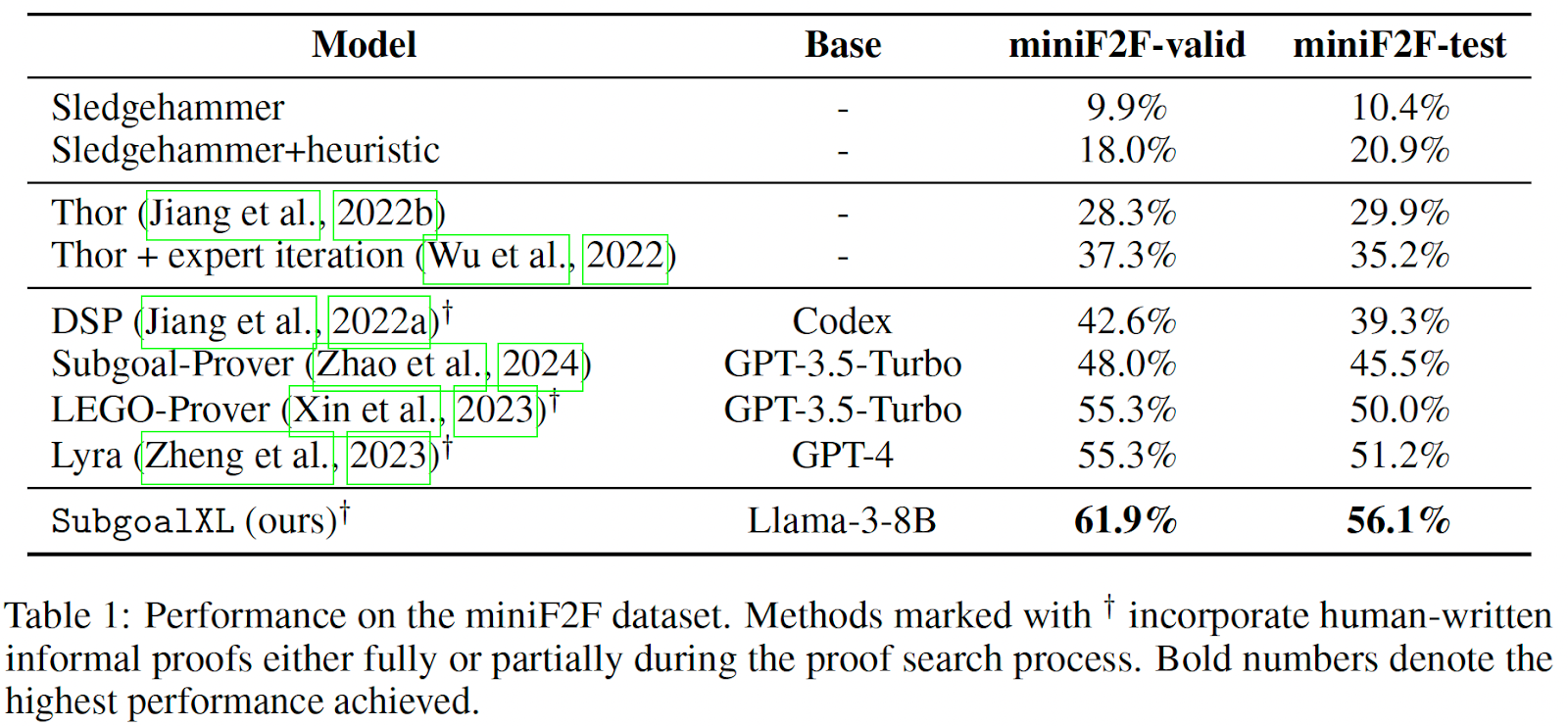

SubgoalXL: Pushing the Boundaries of LLM in Formal Theorem Proving

September 3, 2024

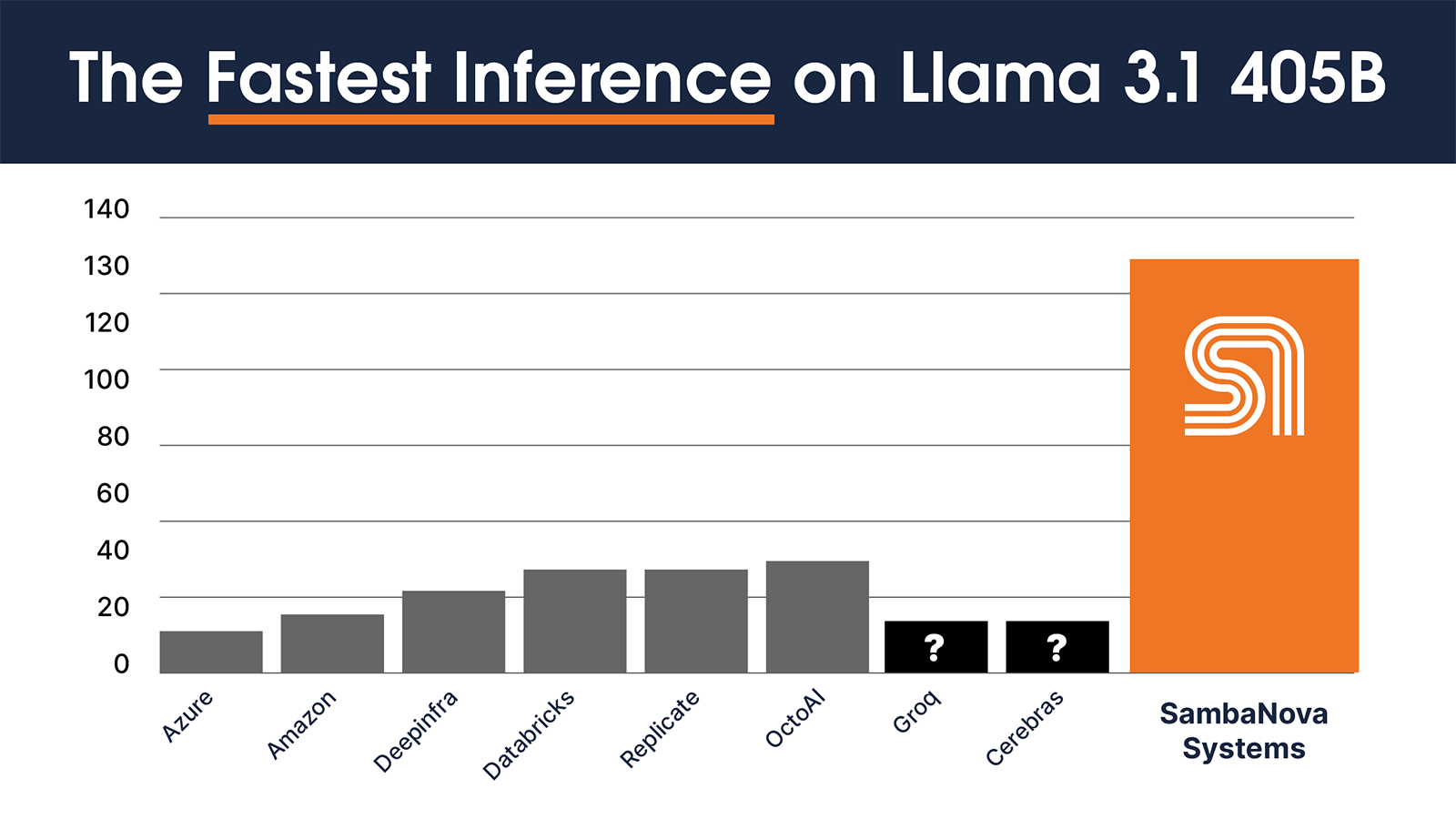

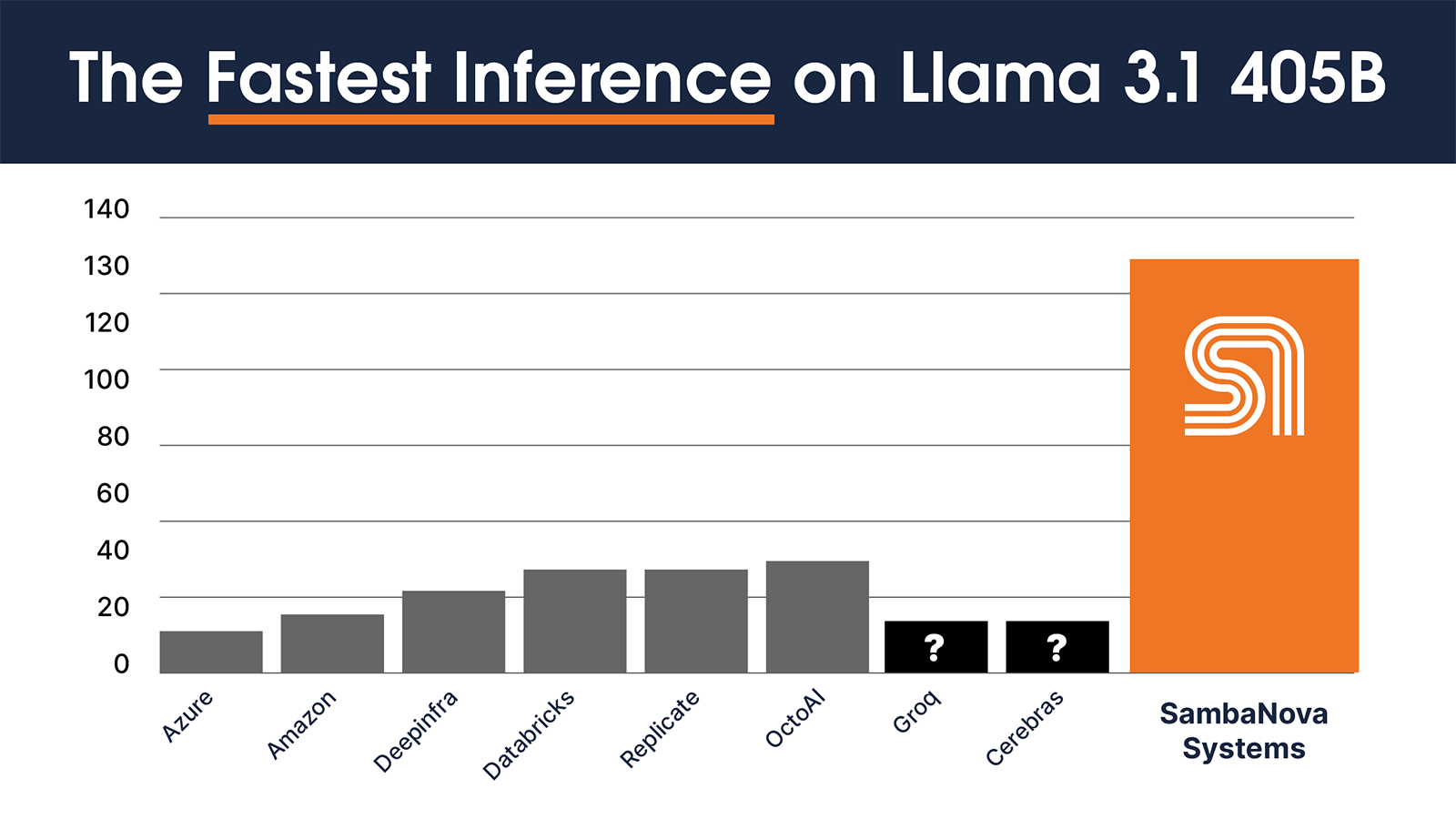

SambaNova Holds Speed Record on Llama 3.1 405B - 4X faster than the rest

July 29, 2024

Three Predictions for the Upcoming Llama 3 405B Announcement

July 19, 2024